Type: GitHub Repository
Original link: https://github.com/Olow304/memvid
Publication date: 2025-09-04

Summary

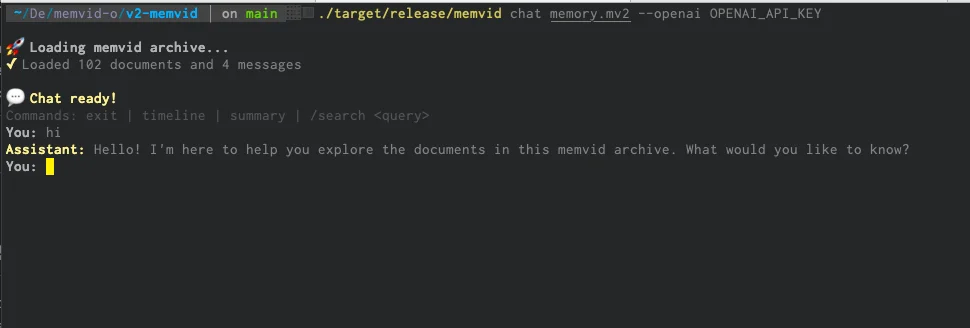

WHAT - Memvid is a Python library that uses video files as a memory store for AI applications. It encodes millions of text fragments into MP4 files and supports fast semantic search over them without a database.

WHY - Memvid matters to AI-focused businesses because it offers a portable memory store that needs no server infrastructure, which suits offline-first applications and deployments where the memory must travel as a single file.

WHO - Memvid is developed by Olow304, with an active community on GitHub. Indirect competitors include traditional database-based memory management solutions and vector databases.

WHERE - Memvid positions itself in the AI memory solutions market as an alternative built on video compression. It is most relevant for applications that must be portable and run without dedicated database infrastructure.

WHEN - Memvid is currently experimental (v1), with a published roadmap for v2 that introduces new features such as the Living-Memory Engine and Time-Travel Debugging.

BUSINESS IMPACT:

- Opportunities: Integration with Retrieval-Augmented Generation (RAG) systems to improve memory management in AI applications. Possibility of offering portable and offline-first memory solutions to clients.

- Risks: Competition from established database-backed and vector-database memory solutions. Dependence on the maturity and stability of v2.

- Integration: Memvid can be added to an existing stack as the retrieval layer of an AI application, where its single-file format makes the memory easy to ship and use offline.
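As a rough illustration of the RAG integration point mentioned above, the sketch below assembles a prompt from chunks that a memory store has already returned. It is a minimal example under stated assumptions: `build_prompt`, its `max_chars` budget, and the prompt wording are hypothetical names chosen for this example, not part of Memvid's API, and the retrieval step itself is left out.

```python
def build_prompt(question: str, retrieved: list[str], max_chars: int = 500) -> str:
    """Assemble a RAG prompt from retrieved chunks within a character budget.

    `retrieved` is assumed to be ordered from most to least relevant,
    as returned by whatever memory store sits in front of the model.
    """
    context_parts = []
    used = 0
    for chunk in retrieved:
        # Stop as soon as the next chunk would exceed the budget.
        if used + len(chunk) > max_chars:
            break
        context_parts.append(f"- {chunk}")
        used += len(chunk)
    context = "\n".join(context_parts)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}"
    )


prompt = build_prompt(
    "What does the library store text in?",
    ["Text chunks are stored in MP4 files.", "Search runs without a database."],
)
print(prompt)
```

The character budget stands in for a model's context limit; a real integration would more likely count tokens.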

TECHNICAL SUMMARY:

- Core technology stack: Python, video codecs (AV1, H.266), QR encoding, semantic search.

- Scalability: Memvid can handle millions of text fragments, but scalability depends on the efficiency of the video codecs used.

- Architectural limitations: Video-based compression may not be optimal for all types of textual data, as highlighted by the community.

- Technical differentiators: Video codecs used to compress text data, a portable file format that needs no database infrastructure, and fast semantic search.
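The combination of frame-based storage and semantic search listed above can be pictured with a small sketch. It assumes one text chunk per video frame and an index that maps each chunk's vector to its frame number; the source does not describe Memvid's internals at this level, so this is an illustration, not the library's implementation. The `embed` function is a toy bag-of-words stand-in for a real embedding model, and all function names are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def build_index(chunks: list[str]) -> list[dict]:
    """Record, for each chunk, the frame number where it would be stored."""
    return [{"frame": i, "vector": embed(c)} for i, c in enumerate(chunks)]


def search(index: list[dict], query: str, top_k: int = 2) -> list[int]:
    """Return the frame numbers of the top_k chunks most similar to the query."""
    q = embed(query)
    ranked = sorted(index, key=lambda e: cosine(q, e["vector"]), reverse=True)
    return [e["frame"] for e in ranked[:top_k]]


chunks = [
    "Video codecs compress sequences of frames.",
    "QR codes store bytes as a grid of black and white modules.",
    "Semantic search ranks text by similarity to a query.",
]
index = build_index(chunks)
frames = search(index, "how do QR codes store data", top_k=1)
print(frames, chunks[frames[0]])
```

In this picture the search never touches the video: it only yields frame numbers, and only those frames would then need to be decoded back into text.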

Use Cases

- Private AI Stack: Integration into proprietary pipelines

- Client Solutions: Implementation for client projects

- Development Acceleration: Reduction of project time-to-market

- Strategic Intelligence: Input for technological roadmap

- Competitive Analysis: Monitoring AI ecosystem

Third-Party Feedback

Community feedback: The community has questioned the efficiency of the proposed compression method, noting that video codecs are not well suited to text encoded as QR codes. Some users have also compared the performance and latency of alternative solutions.
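One way to get a feel for the efficiency concern is a back-of-the-envelope frame count. The sketch below assumes one QR code per frame at the largest standard size (version 40, error correction level L, which holds at most 2,953 bytes in byte mode) and compares the number of frames needed for raw text against the same text compressed with zlib first. It says nothing about the final MP4 size, which depends on the codec and its settings, and the repetitive sample text compresses far better than typical prose would. The helper `frames_needed` is a hypothetical name for this example.

```python
import math
import zlib

# Maximum byte-mode payload of a version 40 QR code at error correction level L.
QR_V40_L_BYTES = 2953


def frames_needed(payload_bytes: int, bytes_per_frame: int = QR_V40_L_BYTES) -> int:
    """Number of one-QR-code frames needed to hold a payload of the given size."""
    return math.ceil(payload_bytes / bytes_per_frame)


# Deliberately repetitive sample text; real corpora compress less well.
text = ("Memvid stores text chunks as QR codes inside video frames. " * 200).encode("utf-8")
compressed = zlib.compress(text, 9)

print("raw bytes:", len(text), "frames:", frames_needed(len(text)))
print("zlib bytes:", len(compressed), "frames:", frames_needed(len(compressed)))
```

A general-purpose compressor already shrinks the payload before any image encoding takes place, which is the kind of baseline the community comparison points to.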

Resources

Original Links

- Memvid: https://github.com/Olow304/memvid

Article recommended and selected by the Human Technology eXcellence team, processed through artificial intelligence (in this case with LLM HTX-EU-Mistral3.1Small) on 2025-09-06 10:47. Original source: https://github.com/Olow304/memvid


