Type: X (Twitter) Post
Original link: https://x.com/sudoingx/status/2030344904774402115?s=43&t=ANuJI-IuN5rdsaLueycEbA
Publication date: 2026-03-23

Summary #

Introduction #

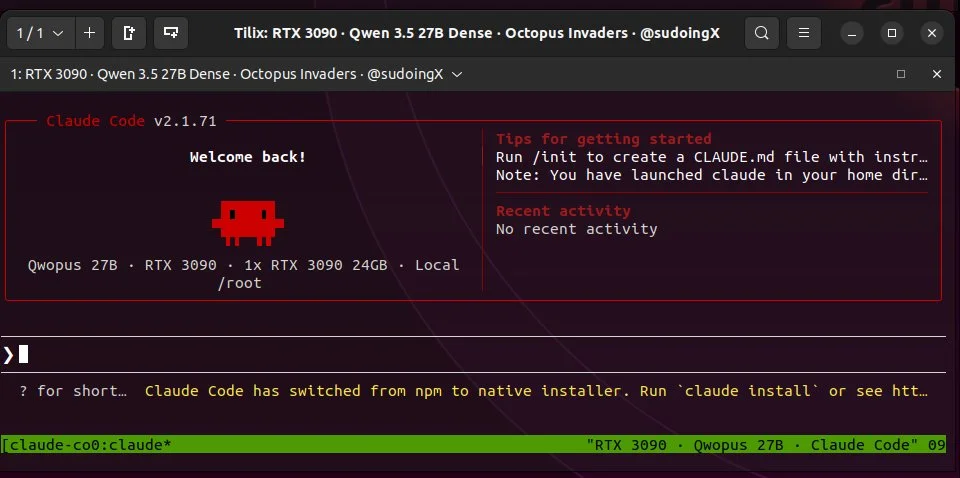

Have you ever spent an entire day testing a new tool, only to realize it is exactly what you were looking for? That is what happened to Francesco Menegoni, who spent a whole day testing Qwopus, a Qwen 3.5 27B model distilled from Claude 4.6 Opus, on a single RTX 3090 graphics card. The experience was positive enough that Qwopus became his new favorite for local hosting. What makes it stand out, and why might you consider adding it to your workflow?

Qwopus pairs stable behavior with usable generation speed on a single consumer GPU. If you are a developer or a tech enthusiast, this article walks through its main features, its context, and how it can be used in your projects.

The Context #

Qwopus is Claude 4.6 Opus, an advanced AI model, distilled into Qwen 3.5 27B. In distillation, a smaller model is trained to reproduce the behavior of a larger one, which yields a lighter and faster model that aims to retain much of the larger model's quality. The idea behind Qwopus is to make AI accessible and performant even on less powerful hardware, such as a single RTX 3090 graphics card.

The problem that Qwopus addresses is the efficient management of computational resources. Many AI tools require high-end hardware to function correctly, making it difficult for developers and small businesses to access these technologies. Qwopus, on the other hand, offers a solution that is both powerful and accessible, allowing complex models to run on more affordable hardware. This fits perfectly into the current tech ecosystem, where resource optimization and scalability have become fundamental priorities.
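To make the hardware constraint concrete, here is a rough back-of-the-envelope sketch of how much memory the weights of a 27B-parameter model need at different precisions, compared with the 24 GB of VRAM on an RTX 3090. The precisions listed are assumptions for illustration: the original post does not say which quantization was used, and the estimate ignores the KV cache, activations, and runtime overhead.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Approximate memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


N_PARAMS = 27e9   # 27B parameters, taken from the model name
VRAM_GB = 24.0    # memory capacity of a single RTX 3090

# Hypothetical precisions; the source does not state which one was used.
for bits in (16, 8, 4):
    need = weight_memory_gb(N_PARAMS, bits)
    verdict = "fits" if need < VRAM_GB else "does not fit"
    print(f"{bits:>2}-bit weights: {need:5.1f} GB -> {verdict} in {VRAM_GB:.0f} GB")
```

Under these assumptions, only the 4-bit case leaves headroom for context on a 24 GB card, which suggests that some form of quantization is likely involved in a setup like this one.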

Why It’s Interesting #

Performance and Stability #

One of the most impressive features of Qwopus is its stability. During the tests, Francesco Menegoni noted the absence of crashes, a common problem with other AI tools. Additionally, the “thinking” mode works natively, allowing for a more fluid and natural interaction with the model. This is a significant advantage for those working with AI, as it reduces the time spent on debugging and improves workflow efficiency.

Speed and Efficiency #

Qwopus generates between 29 and 35 tokens per second. This is particularly impressive considering that the model is running on a single RTX 3090 graphics card. Generation speed in this range keeps interactive use practical, which is crucial for many AI projects.
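As a quick illustration of what 29 to 35 tokens per second means in practice, the sketch below converts the reported range into waiting time for a few response lengths. The response lengths are hypothetical, and the calculation covers generation only; it does not include the time needed to process the prompt.

```python
def generation_seconds(n_tokens: int, tokens_per_second: float) -> float:
    """Time to generate n_tokens at a constant decoding speed."""
    return n_tokens / tokens_per_second


REPORTED_RANGE = (29.0, 35.0)  # tokens per second, as reported in the post

# Hypothetical response lengths, chosen only for illustration.
for n_tokens in (200, 1000, 4000):
    slow = generation_seconds(n_tokens, REPORTED_RANGE[0])
    fast = generation_seconds(n_tokens, REPORTED_RANGE[1])
    print(f"{n_tokens:>5} tokens: {fast:6.1f} s to {slow:6.1f} s")
```

A 1000-token answer, for example, would take roughly half a minute at these speeds.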

Accessibility #

Another strong point of Qwopus is its accessibility. Being optimized to run on less powerful hardware, it allows a greater number of developers and companies to access advanced AI technologies. This is an important step towards the democratization of AI, making these technologies available to a wider audience.

How It Works #

Using Qwopus is relatively simple. The model has been distilled to run on a single RTX 3090 graphics card, meaning that high-end hardware is not required to achieve high performance. The setup is straightforward: just load the model and start working. There are no complicated prerequisites or lengthy configurations, making it ideal for those who want to get started quickly with AI.
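The original post does not say which runtime was used to serve the model, so the following is only a minimal sketch of what "load the model and start working" might look like from the client side. It assumes a local server that exposes an OpenAI-compatible chat completions endpoint; the URL, port, and model name are hypothetical placeholders to adapt to your own setup.

```python
import json
import urllib.request

# Assumptions (not from the source): a local server with an OpenAI-compatible
# chat endpoint at this address, and this model identifier.
BASE_URL = "http://localhost:8080/v1/chat/completions"
MODEL_NAME = "qwopus"  # hypothetical name; use whatever your server reports


def build_chat_request(prompt: str, max_tokens: int = 256) -> urllib.request.Request:
    """Build a POST request carrying a single-turn chat payload."""
    payload = {
        "model": MODEL_NAME,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    return urllib.request.Request(
        BASE_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str, timeout: float = 120.0) -> str:
    """Send the request and return the first reply's text (server must be running)."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=timeout) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

With a server running, `ask("Explain model distillation in two sentences.")` would return the model's reply as a string. Keeping request construction separate from sending makes the payload easy to inspect without a live server.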

For those already familiar with Claude Code, integrating Qwopus is even simpler. The model is designed to work natively with Claude Code, allowing for a smooth and uninterrupted interaction. This is a significant advantage for those already using this tool, as no additional learning or complex configuration is required.

Final Thoughts #

Qwopus represents a significant step forward in the world of AI tools. Its ability to offer high performance on less powerful hardware makes it an ideal solution for developers and small businesses. The stability and efficiency of Qwopus make it a reliable option for those working with AI, allowing for reduced time spent on debugging and improved workflow efficiency.

In a world where resource optimization and scalability have become fundamental priorities, Qwopus offers a solution that is both powerful and accessible. This tool has the potential to democratize AI, making these technologies available to a wider audience. If you are a developer or a tech enthusiast, it is worth taking a look at Qwopus and seeing how it can improve your projects.

Use Cases #

- Private AI Stack: Integration into proprietary pipelines

- Client Solutions: Implementation for client projects

Resources #

Original Links #

- spent the entire day testing Qwopus (Claude 4 - Original link

Article reported and selected by the Human Technology eXcellence team, processed through artificial intelligence (in this case with LLM HTX-EU-Mistral3.1Small) on 2026-03-23 08:47 Original source: https://x.com/sudoingx/status/2030344904774402115?s=43&t=ANuJI-IuN5rdsaLueycEbA

The HTX Take #

This topic is at the heart of what we build at HTX. The technology discussed here — whether it’s about AI agents, language models, or document processing — represents exactly the kind of capability that European businesses need, but deployed on their own terms.

The challenge isn’t whether this technology works. It does. The challenge is deploying it without sending your company data to US servers, without violating GDPR, and without creating vendor dependencies you can’t escape.

That’s why we built ORCA — a private enterprise chatbot that brings these capabilities to your infrastructure. Same power as ChatGPT, but your data never leaves your perimeter. No per-user pricing, no data leakage, no compliance headaches.

Want to see how ready your company is for AI? Take our free AI Readiness Assessment — 5 minutes, personalized report, actionable roadmap.

FAQ

How can AI improve software development productivity in my company?

AI coding assistants can dramatically accelerate development — from code generation to testing to documentation. However, using cloud-based tools like GitHub Copilot means your proprietary code is processed externally. Private AI coding tools on your infrastructure keep your codebase secure while boosting developer productivity.

What are the security risks of AI-assisted coding?

Studies show AI-generated code has 1.7x more major issues and 2.74x more security vulnerabilities. The solution isn't avoiding AI; it's pairing AI assistance with proper code review, security scanning, and private deployment to prevent IP leakage.