Type: Web Article

Original link: https://www.nature.com/articles/s41586-025-09422-z

Publication date: 2025-02-14

Summary

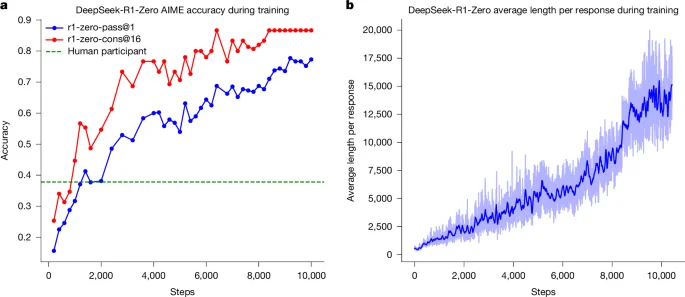

WHAT - The Nature article describes DeepSeek-R1, a Large Language Model (LLM) whose reasoning capabilities are trained with reinforcement learning (RL). The approach does not depend on human-annotated reasoning demonstrations, and the model develops advanced reasoning patterns such as self-reflection and dynamic strategy adaptation on its own.

WHY - It is relevant because it removes a key limitation of traditional techniques, which depend on human demonstrations. The trained model performs better on verifiable tasks such as mathematics, programming, and STEM than counterparts trained with conventional supervised learning on such demonstrations. This can lead to more autonomous and higher-performing models.

WHO - Key players include the DeepSeek-AI researchers who developed DeepSeek-R1 and the scientific community that studies and implements advanced AI models. The GitHub community actively discusses and improves the model.

WHERE - DeepSeek-R1 sits at the intersection of Large Language Models and reinforcement learning, within the broader research and development ecosystem for artificial intelligence models.

WHEN - The article is dated February 2025. DeepSeek-R1 is therefore a recent model, though one already documented in peer-reviewed academic research.

BUSINESS IMPACT:

- Opportunities: Integrating DeepSeek-R1, or its training approach, to strengthen the reasoning capabilities of existing models and offer more autonomous, higher-performing solutions.

- Risks: Competition from other models that use advanced RL techniques, and a potential need to invest in research and development to stay competitive.

- Integration: Possible integration with the existing stack to improve the reasoning capabilities of corporate AI models.

TECHNICAL SUMMARY:

- Core technology stack: Python, Go, machine learning frameworks, neural networks, RL algorithms.

- Scalability: Reasoning performance improves as RL training is scaled up, but this requires significant computational resources.

- Technical differentiators: Use of Group Relative Policy Optimization (GRPO) and skipping the supervised fine-tuning phase before RL, which lets the model explore reasoning strategies more freely and autonomously.
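The core idea behind GRPO, named in the technical differentiators above, can be illustrated with a small sketch. For each prompt, a group of answers is sampled, each answer receives a reward, and every reward is normalized against the mean and standard deviation of its own group, so no separate value model is needed. The code below is only an illustration under stated assumptions: the function names, the exact-match reward, and the sample answers are hypothetical and not taken from the article, and the policy-gradient update that would consume these advantages is omitted.

```python
from statistics import mean, pstdev


def exact_match_reward(answer: str, reference: str) -> float:
    """Hypothetical verifiable reward: 1.0 if the answer matches the reference."""
    return 1.0 if answer.strip() == reference.strip() else 0.0


def group_relative_advantages(rewards: list[float], eps: float = 1e-8) -> list[float]:
    """Normalize each reward against the other samples in the same group.

    Assumption: population standard deviation, plus a small eps so that a
    group with identical rewards yields zero advantages instead of dividing
    by zero.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# Illustrative group of four sampled answers to one prompt (made-up data).
sampled_answers = ["42", "41", "42 ", "7"]
rewards = [exact_match_reward(a, "42") for a in sampled_answers]
advantages = group_relative_advantages(rewards)

for answer, adv in zip(sampled_answers, advantages):
    print(f"{answer!r}: advantage {adv:+.3f}")
```

Answers that score above their group's average get a positive advantage and are reinforced, while those below average are discouraged. Because the signal is relative to the group, a prompt where every sample is right (or every sample is wrong) contributes no learning signal in this sketch.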

Use Cases

- Private AI Stack: Integration into proprietary pipelines

- Client Solutions: Implementation for client projects

- Development Acceleration: Reduction in time-to-market for projects

Third-Party Feedback

Community feedback: Users appreciate DeepSeek-R1 for its reasoning capabilities but raise concerns about repetitive output and poor readability. Some suggest using quantized versions to improve efficiency, and others propose integrating cold-start data to enhance performance.

Resources

Original Links

Article recommended and selected by the Human Technology eXcellence team, processed through artificial intelligence (in this case with LLM HTX-EU-Mistral3.1Small) on 2025-09-18 15:08.

Original source: https://www.nature.com/articles/s41586-025-09422-z