Type: GitHub Repository

Original link: https://github.com/rasbt/LLMs-from-scratch

Publication date: 2025-09-04

Summary

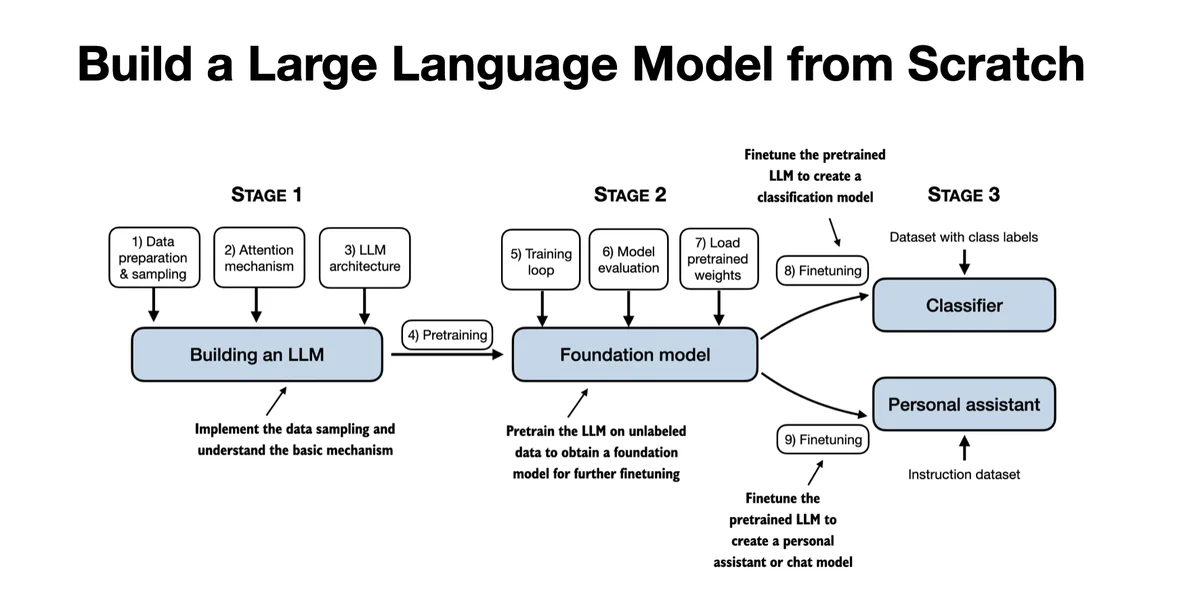

WHAT - This GitHub repository contains the code to develop, pre-train, and fine-tune a ChatGPT-like large language model (LLM) in PyTorch. It is the official code for the book “Build a Large Language Model (From Scratch)” by Sebastian Raschka, published by Manning.

WHY - It matters for AI-focused businesses because it is a detailed, hands-on guide to building and understanding LLMs, which makes it possible to replicate and adapt the underlying natural language processing techniques. This can speed up the development of customized models and strengthen internal expertise.

WHO - The main actors are Sebastian Raschka (author of the book and the repository), Manning Publications (publisher of the book), and the community of developers on GitHub who contribute to and use the repository.

WHERE - It sits in the LLM education and development space, offering practical resources for those who want to build language models themselves. It is part of the PyTorch ecosystem and is aimed at developers and researchers interested in LLMs.

WHEN - The repository is actively maintained and updated regularly. It is an established project that continues to grow and reflects current trends in LLM development.

BUSINESS IMPACT:

- Opportunities: Accelerate the development of customized language models, improve internal expertise, and reduce training costs.

- Risks: Dependence on a single repository for training; risk of obsolescence if it is not regularly updated.

- Integration: Can be integrated into the existing AI development stack, using PyTorch and other technologies mentioned in the repository.

TECHNICAL SUMMARY:

- Core technology stack: PyTorch, Python, Jupyter Notebooks, and supporting natural language processing libraries (for example, the tiktoken tokenizer).

- Scalability: The repository is designed for education and prototyping, not for production-scale workloads. However, the techniques it teaches can be scaled up on cloud infrastructure.

- Technical differentiators: Detailed implementation of attention mechanisms, pre-training, and fine-tuning, with practical examples and exercise solutions.
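To make the attention mechanism mentioned above more concrete, here is a minimal sketch of causal scaled dot-product self-attention, the building block of GPT-style models. It is not taken from the repository: the repository works in PyTorch with learned query, key, and value projections, whereas this illustration uses plain Python to stay dependency-free and assumes queries, keys, and values are all the raw input vectors. The function names and the toy input are hypothetical.

```python
import math


def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def causal_self_attention(queries, keys, values):
    """Scaled dot-product attention with a causal mask.

    queries, keys, values: lists of float vectors, one per token.
    Returns (outputs, weights); weights[i][j] is how much token i
    attends to token j.
    """
    d_k = len(keys[0])
    outputs, weights = [], []
    for i, q in enumerate(queries):
        # Causal mask: token i may only look at positions 0..i.
        scores = [
            sum(qx * kx for qx, kx in zip(q, keys[j])) / math.sqrt(d_k)
            for j in range(i + 1)
        ]
        # Future positions get exactly zero weight.
        w = softmax(scores) + [0.0] * (len(keys) - i - 1)
        weights.append(w)
        # Output is the attention-weighted sum of the value vectors.
        outputs.append([
            sum(w[j] * values[j][d] for j in range(len(values)))
            for d in range(len(values[0]))
        ])
    return outputs, weights


# Toy input: three tokens with 2-dimensional embeddings (made-up numbers).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
# Assumption: queries = keys = values = x, i.e. no learned projections.
out, attn = causal_self_attention(x, x, x)
```

Each row of `attn` sums to 1, and entries above the diagonal are zero, so a token never attends to tokens that come after it. A trainable version would add weight matrices for the query, key, and value projections and typically several attention heads.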

Use Cases

- Private AI Stack: Integration into proprietary pipelines

- Client Solutions: Implementation for client projects

- Development Acceleration: Reduction of project time-to-market

- Strategic Intelligence: Input for the technology roadmap

- Competitive Analysis: Monitoring the AI ecosystem

Third-Party Feedback

Community feedback: Users appreciate the shared resources for building and understanding language models, with a general consensus on the usefulness of the guides and implementations. The main concerns are about the complexity and accessibility of fine-tuning techniques, with requests for further specific tutorials for natural language processing tasks.

Resources

Original Links

- Build a Large Language Model (From Scratch): https://github.com/rasbt/LLMs-from-scratch

Article suggested and selected by the Human Technology eXcellence team, processed through artificial intelligence (in this case with LLM HTX-EU-Mistral3.1Small) on 2025-09-04 19:22. Original source: https://github.com/rasbt/LLMs-from-scratch

Related Articles

- AI Engineering Hub - Open Source, AI, LLM

- LoRAX: Multi-LoRA inference server that scales to 1000s of fine-tuned LLMs - Open Source, LLM, Python

- MCP-Use - AI Agent, Open Source