Type: GitHub Repository

Original link: https://github.com/HKUDS/AI-Researcher

Publication date: 2025-09-24

Summary

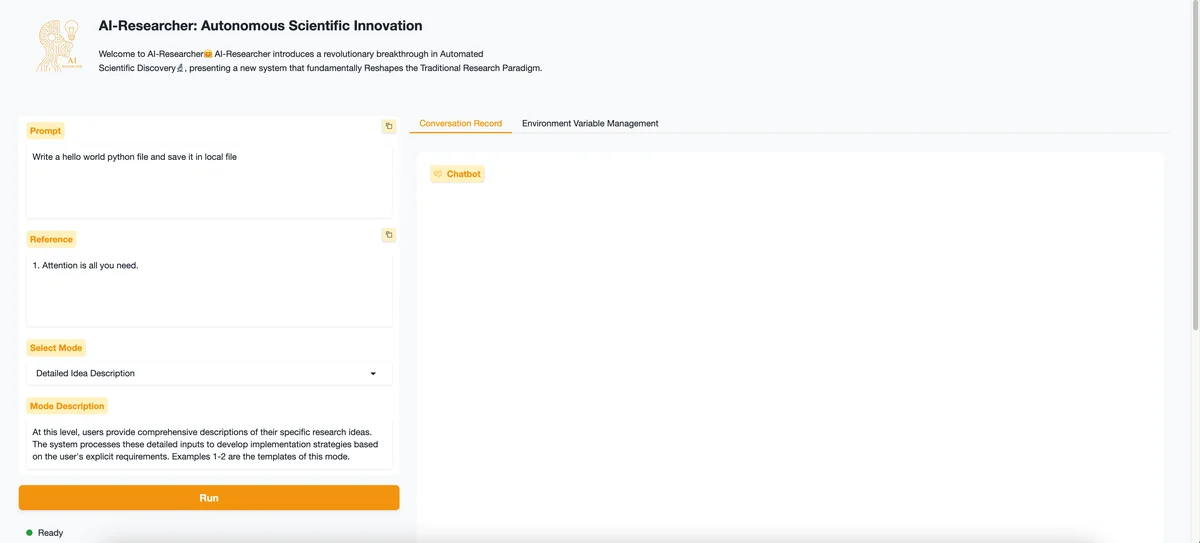

WHAT - AI-Researcher is an autonomous scientific research system that automates the research process from concept to publication, integrating advanced AI agents to accelerate scientific innovation.

WHY - It is relevant for the AI business because it aims to automate the scientific research workflow end to end, which could reduce the time and cost of discovering and publishing new knowledge.

WHO - The main players are HKUDS (the Data Intelligence Lab at the University of Hong Kong) and the community of developers contributing to the project.

WHERE - It positions itself in the market of AI solutions for scientific research, offering a complete ecosystem for research automation.

WHEN - It is a relatively new project, presented at NeurIPS 2025, and a production-ready version is already available, which suggests rapid development.

BUSINESS IMPACT:

- Opportunities: Automation of scientific research to accelerate the production of publications and patents.

- Risks: Competition with other automated research platforms and dependence on external AI models.

- Integration: Possible integration with research management tools and scientific publication platforms.

TECHNICAL SUMMARY:

- Core technology stack: Python, Docker, LiteLLM, Google Gemini-2.5, GPU support.

- Scalability: Runs in Docker containers, so additional instances can be started on more hosts to scale horizontally. Likely limits are the handling of large data volumes and the dependence on external APIs (rate limits, cost, availability).

- Technical differentiators (as presented by the project): Full autonomy, seamless orchestration of agents, advanced AI integration, and research acceleration.
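To make the Docker and GPU points above concrete, here is a minimal sketch of how a launcher script might assemble a `docker run` command. The helper `docker_run_command`, the image name `research-agent:latest`, and the choice to pass `OPENROUTER_API_KEY` through from the host are assumptions made for illustration and are not taken from the repository; only the `--gpus` and `-e` flags are standard Docker CLI options. The command is built but not executed.

```python
import shlex


def docker_run_command(image, gpu_ids=None, pass_env=None):
    """Build an argv list for `docker run`; nothing is executed here."""
    cmd = ["docker", "run", "--rm"]
    if gpu_ids:
        # Docker parses the --gpus value as CSV, so a device list that
        # contains commas is wrapped in literal double quotes.
        devices = ",".join(str(i) for i in gpu_ids)
        cmd += ["--gpus", f'"device={devices}"']
    for name in pass_env or []:
        # `-e NAME` without a value forwards the host's variable, which
        # keeps secrets such as API keys out of the command line.
        cmd += ["-e", name]
    cmd.append(image)
    return cmd


if __name__ == "__main__":
    # Hypothetical image name, used only for this sketch.
    argv = docker_run_command(
        "research-agent:latest",
        gpu_ids=[0, 1],
        pass_env=["OPENROUTER_API_KEY"],
    )
    print(shlex.join(argv))
```

Returning an argument list rather than a shell string means the result can be handed directly to `subprocess.run` without extra quoting. Starting several such containers on different hosts is one simple way to scale out.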

USEFUL DETAILS:

- AI models used: Google Gemini-2.5

- Hardware configuration: GPU support, with the devices to use selectable in the configuration, including multi-GPU setups.

- APIs and integrations: Uses the OpenRouter API to access completion and chat models.

- Documentation and support: Detailed documentation, plus an active community on Slack and Discord.
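The model and API details above can be illustrated with a small sketch of a chat request to OpenRouter's OpenAI-compatible endpoint. The project itself routes calls through LiteLLM; this example uses only the Python standard library so that it stays self-contained. The helper names `build_chat_request` and `extract_reply`, and the model identifier `google/gemini-2.5-pro`, are assumptions for illustration and may not match what the repository configures. The request is constructed but not sent.

```python
import json
import urllib.request

OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"


def build_chat_request(api_key, prompt, model="google/gemini-2.5-pro"):
    """Return a prepared POST request for a single-turn chat completion."""
    payload = {
        "model": model,  # assumed model identifier, adjust as needed
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def extract_reply(response_body):
    """Pull the assistant text out of an OpenAI-style JSON response."""
    return json.loads(response_body)["choices"][0]["message"]["content"]
```

To actually send the request, pass the object to `urllib.request.urlopen` and feed the response body to `extract_reply`. Because every call depends on an external service, a real pipeline would also want timeouts and retries.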

Use Cases

- Private AI Stack: Integration into proprietary pipelines

- Client Solutions: Implementation for client projects

- Development Acceleration: Reduction of project time-to-market

- Strategic Intelligence: Input for technological roadmaps

- Competitive Analysis: Monitoring the AI ecosystem

Resources

Original Links

- AI-Researcher: Autonomous Scientific Innovation - Original link: https://github.com/HKUDS/AI-Researcher

Article suggested and selected by the Human Technology eXcellence team, processed through artificial intelligence (in this case with LLM HTX-EU-Mistral3.1Small) on 2025-09-24 07:35. Original source: https://github.com/HKUDS/AI-Researcher

Related Articles

- nanochat - Python, Open Source

- Tongyi DeepResearch: A New Era of Open-Source AI Researchers | Tongyi DeepResearch - Foundation Model, AI Agent, AI

- paperetl - Open Source